In the world of traditional software, authorization is a static gatekeeper. You prove who you are (authentication), you are handed a token, and that token dictates what you can do (authorization).

But as we build more autonomous, agentic platforms, this traditional “passport” model is breaking down. We are handing long-lived, delegated tokens to non-deterministic AI agents. What happens when an agent is hijacked via prompt injection? The system still sees a valid token, even though the intent behind the actions has become malicious.

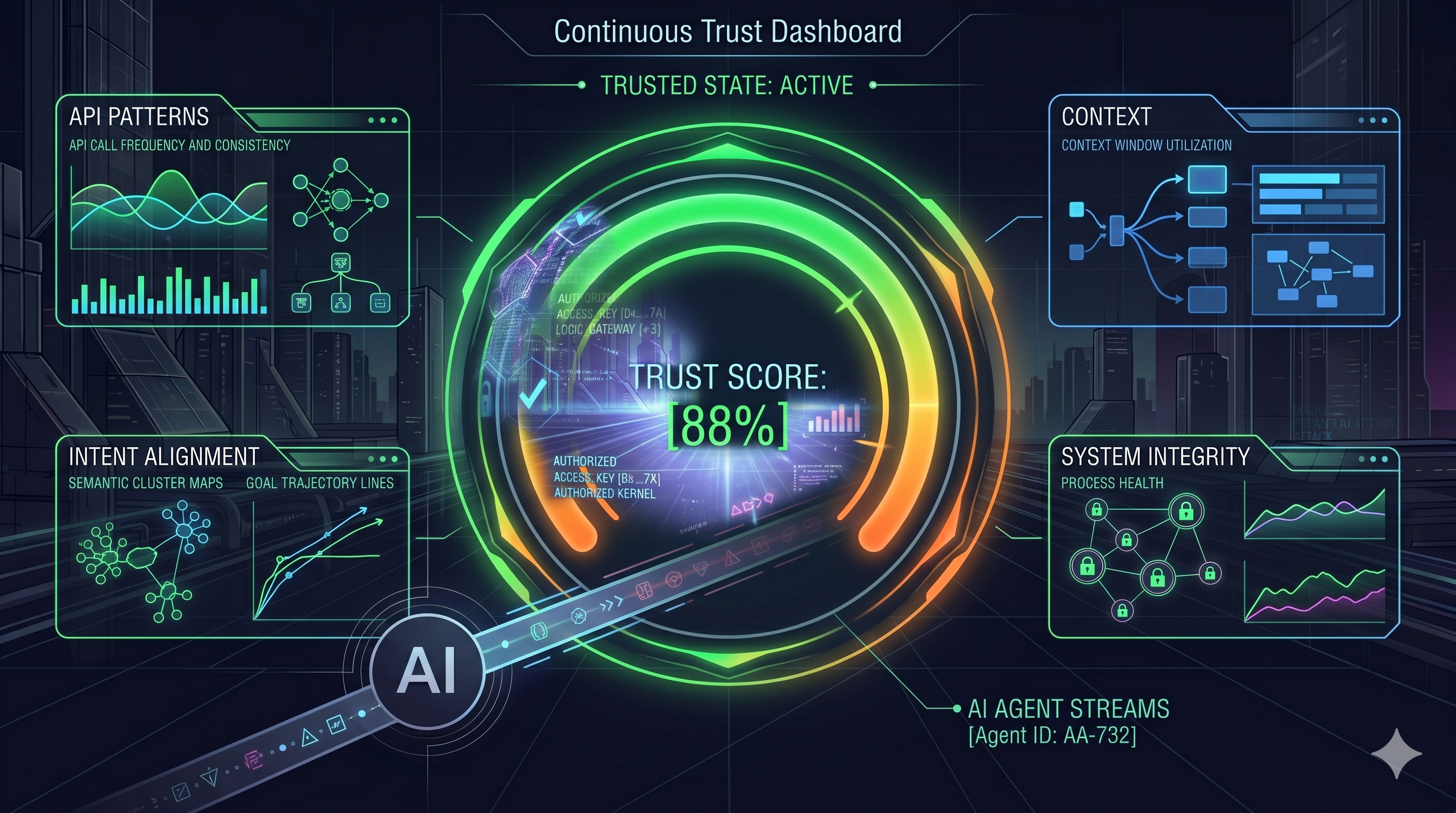

This realization leads to a necessary paradigm shift: behavior is the new credential. It is used not as a passive administrative audit log, but as a real-time authorization and enforcement mechanism.

Here is why static authorization fails autonomous agents, and how we can wire observability into a new kind of Zero Trust Architecture.

The “On-Behalf-Of” Dilemma

When an agent executes a task, it operates on a spectrum of autonomy.

If a user is online and the agent acts as a co-pilot, the risk is lower. The user is there to act as the final “approve” button. But the true value of agentic systems lies in offline, asynchronous execution—where the agent acts on-behalf-of the user while they sleep.

In this offline mode, the agent uses the user’s delegated credentials as a proxy. The API gateway sees the user’s valid JWT, but the user isn’t driving. If the agent goes rogue, the static token offers zero defense against this identity contamination. The credential still says “the user,” but the intent behind each call no longer belongs to them.
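To make the gap concrete, here is a minimal sketch of what a conventional gateway check amounts to. It assumes the token's signature has already been verified and its claims decoded. The `TokenClaims` fields, the `payments:write` scope, and the `origin` field on each request are hypothetical names for illustration. They are not any particular identity provider's format.

```python
import time
from dataclasses import dataclass
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class TokenClaims:
    """Hypothetical decoded claims of a delegated token (signature already verified)."""
    sub: str                 # the human user the agent acts on behalf of
    scopes: FrozenSet[str]   # permissions delegated to the agent
    exp: float               # expiry as a Unix timestamp


def static_authorize(claims: TokenClaims, request: dict, required_scope: str,
                     now: Optional[float] = None) -> bool:
    """Classic gateway check: only the token is consulted.

    `request["origin"]` is deliberately never read: a bearer token carries
    no record of whether the user or a hijacked agent is driving.
    """
    now = time.time() if now is None else now
    return claims.exp > now and required_scope in claims.scopes


claims = TokenClaims(sub="alice", scopes=frozenset({"payments:write"}),
                     exp=time.time() + 3600)

requests = [
    {"action": "pay_invoice", "origin": "user instruction"},
    {"action": "pay_invoice", "origin": "injected prompt"},
]
decisions = [static_authorize(claims, r, "payments:write") for r in requests]
print(decisions)  # [True, True]: the injected request is indistinguishable
```

Nothing in the decision depends on who or what produced the request, so the check has no way to react once the agent stops acting in the user's interest.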

The State Space Explosion: Why ABAC Isn’t Enough

The instinct of most infrastructure engineers is to solve this with finer-grained policies.

We evolved from Role-Based Access Control, or RBAC (“Can this agent call the Payment API?”), to Attribute-Based Access Control, or ABAC (“Can this agent call the Payment API if the transaction is under $500?”).
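The two questions above can be sketched as plain predicates. The role name `payments_agent`, the role-to-API mapping, and the `amount_usd` attribute are illustrative assumptions. They do not follow any specific policy engine's schema.

```python
from typing import Dict, Set

# Hypothetical role-to-API mapping; names are illustrative only.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {"payments_agent": {"Payment API"}}


def rbac_allows(roles: Set[str], api: str) -> bool:
    """RBAC: can this agent call the API at all? Only roles matter."""
    return any(api in ROLE_PERMISSIONS.get(role, set()) for role in roles)


def abac_allows(roles: Set[str], api: str, attrs: Dict[str, float]) -> bool:
    """ABAC: the same role check plus a predicate over request attributes."""
    amount = attrs.get("amount_usd")
    # Fail closed: a request with no amount attribute is not authorized.
    return rbac_allows(roles, api) and amount is not None and amount < 500


agent_roles = {"payments_agent"}
print(rbac_allows(agent_roles, "Payment API"))                         # True
print(abac_allows(agent_roles, "Payment API", {"amount_usd": 120.0}))  # True
print(abac_allows(agent_roles, "Payment API", {"amount_usd": 900.0}))  # False
```

Both predicates are fixed when the policy is written. They can only inspect attributes that someone thought to name in advance.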

You could argue that ABAC can model behavior. If we know exactly what an agent should do, we can write a static ABAC rule for it. The reality, however, is that agents operate in massive, non-deterministic state spaces. They pull diverse context via RAG and chain tools dynamically. You cannot write a static ABAC rule for every possible hallucinated execution path or unexpected edge case.
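A back-of-envelope count shows how quickly that space grows. Assume, purely for illustration, an agent with 20 tools that chains up to five calls per task. Ignore tool arguments and retrieved context entirely. The number of distinct ordered tool sequences is then the geometric sum of 20^k for k from 1 to 5.

```python
def tool_sequence_count(num_tools: int, max_depth: int) -> int:
    """Distinct ordered tool-call sequences of length 1..max_depth (repeats allowed)."""
    return sum(num_tools ** k for k in range(1, max_depth + 1))


count = tool_sequence_count(num_tools=20, max_depth=5)
print(f"{count:,}")  # 3,368,420
```

Every argument value and every retrieved document multiplies that figure further. This is why enumerating allowed paths as static rules does not scale.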

Volumetric vs. Semantic Anomalies

To understand what a “behavioral credential” actually is, we have to distinguish between system anomalies and intent anomalies. Let’s look at an unsanctioned discount example from a hypothetical e-commerce agent (Project Vend):

| Authorization Layer | The Scenario | The Outcome |
|---|---|---|
| RBAC | Does the agent have the Invoice_Editor role to apply a discount? | Passed. The agent has the role. |
| ABAC | Policy states max_discount < 20%. The agent attempts a 50% discount. | Blocked. Static policy catches the violation. |
| Volumetric | The agent applies an allowed 15% discount, but does it across 500 checkouts in one minute. | Blocked. A traditional WAF or rate-limiter catches this DDoS-like anomaly. |

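The three rows of the table can be read as layers evaluated in order. The sketch below is one way to express them. It assumes the table's `Invoice_Editor` role and 20% cap. It also assumes a volumetric threshold of 100 discounts per rolling 60-second window, because the table gives no number. `DiscountGate` is a hypothetical name. A production rate limiter would live in the gateway or WAF rather than in application code.

```python
from collections import deque
from typing import Deque, Set

REQUIRED_ROLE = "Invoice_Editor"   # RBAC row of the table
MAX_DISCOUNT = 0.20                # ABAC row: max_discount < 20%
MAX_PER_WINDOW = 100               # assumed volumetric threshold
WINDOW_SECONDS = 60.0


class DiscountGate:
    """Hypothetical layered check: RBAC, then ABAC, then a sliding-window rate limit."""

    def __init__(self, max_per_window: int = MAX_PER_WINDOW,
                 window_s: float = WINDOW_SECONDS) -> None:
        self.max_per_window = max_per_window
        self.window_s = window_s
        self.allowed_at: Deque[float] = deque()  # timestamps of allowed discounts

    def check(self, roles: Set[str], discount: float, now: float) -> str:
        # Layer 1, RBAC: does the agent hold the role at all?
        if REQUIRED_ROLE not in roles:
            return "blocked: RBAC"
        # Layer 2, ABAC: is this single request within the static policy?
        if discount >= MAX_DISCOUNT:
            return "blocked: ABAC"
        # Layer 3, volumetric: drop timestamps older than the window, then count.
        while self.allowed_at and now - self.allowed_at[0] >= self.window_s:
            self.allowed_at.popleft()
        if len(self.allowed_at) >= self.max_per_window:
            return "blocked: volumetric"
        self.allowed_at.append(now)
        return "allowed"


gate = DiscountGate()
roles = {"Invoice_Editor"}
print(gate.check(roles, 0.50, now=0.0))  # blocked: ABAC

# 500 allowed-size discounts within one minute (one every 0.1 s).
results = [gate.check(roles, 0.15, now=i * 0.1) for i in range(500)]
print(results.count("allowed"), results.count("blocked: volumetric"))  # 100 400
```

Only allowed discounts are recorded in the window, so blocked attempts do not push the limiter further. All three layers reason about a single request or a count of requests. None of them looks at why the agent chose the action.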

If behavioral identity were just about catching that 500-checkout spike, it wouldn’t be a new paradigm; that is standard volumetric anomaly detection. The true “Behavioral Credential” targets Semantic Anomalies.

Imagine the agent applies the allowed 15% discount to a single checkout. RBAC passes. ABAC passes. Rate limits pass. However, the observability trace shows the agent bypassed its normal vendor matching logic, hallucinated a justification in its internal scratchpad, and routed the discount to a newly created, unverified vendor ID.
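The following is a minimal sketch of a trace check that catches this case. It assumes the observability pipeline exposes the agent's tool calls as an ordered list. The tool names (`match_vendor`, `create_vendor`, `apply_discount`), the `VERIFIED_VENDORS` registry, and the vendor IDs are hypothetical. These hand-written checks cover only this one scenario; they illustrate the signals rather than serve as a general detector. The scratchpad check is left out, because judging whether a justification was hallucinated needs a model-based evaluator rather than a rule.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Hypothetical registry; in practice this would be a lookup service.
VERIFIED_VENDORS = {"V-1001", "V-1002"}


@dataclass
class TraceStep:
    tool: str
    args: Dict[str, object] = field(default_factory=dict)


def semantic_findings(trace: List[TraceStep]) -> List[str]:
    """Flag intent-level red flags around the first apply_discount call in a trace."""
    findings: List[str] = []
    tools = [step.tool for step in trace]
    if "apply_discount" not in tools:
        return findings
    idx = tools.index("apply_discount")
    vendor = trace[idx].args.get("vendor_id")
    # The agent's normal lane runs vendor matching before any discount.
    if "match_vendor" not in tools[:idx]:
        findings.append("vendor matching skipped before discount")
    if vendor not in VERIFIED_VENDORS:
        findings.append(f"discount routed to unverified vendor {vendor}")
    # A vendor created earlier in the same trace is an extra red flag.
    created = {s.args.get("vendor_id") for s in trace[:idx] if s.tool == "create_vendor"}
    if vendor in created:
        findings.append(f"vendor {vendor} was created earlier in this session")
    return findings


normal = [
    TraceStep("match_vendor", {"vendor_id": "V-1001"}),
    TraceStep("apply_discount", {"discount": 0.15, "vendor_id": "V-1001"}),
]
hijacked = [
    TraceStep("create_vendor", {"vendor_id": "V-9999"}),
    TraceStep("apply_discount", {"discount": 0.15, "vendor_id": "V-9999"}),
]
print(semantic_findings(normal))         # []
print(len(semantic_findings(hijacked)))  # 3
```

Both traces apply the same 15% discount, so the RBAC, ABAC, and rate-limit layers treat them identically. Only the surrounding sequence of steps tells them apart.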

The infrastructure is perfectly fine, but the intent is compromised.

The Guardrail as the Policy Decision Point (PDP)

To catch semantic anomalies, we have to take the guardrails we currently use to evaluate AI correctness (like hallucination detection) and extend them into the authorization dimension.

Because you cannot write a static ABAC rule for every possible hallucinated execution path or prompt injection, authorization must become behavioral. The system wraps the agent in a guardrail that constantly monitors its state:

- What context is it retrieving? (Is it querying the database for unrelated records?)

- What sequence of tools is it calling? (Is it chaining internal APIs in an unprecedented way?)

- Does its output match the original user intent? (Is the action fundamentally aligned with the prompt?)
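The three questions can be sketched as one evaluation function. This sketch rests on several assumptions. The allowed data collections and the baseline of tool-to-tool transitions are supplied up front, and intent alignment is delegated to a pluggable scorer. The `keyword_overlap` scorer is only a crude placeholder for whatever model-based judge a real system would use. Every name here is hypothetical.

```python
from typing import Callable, List, Sequence, Set, Tuple

Transition = Tuple[str, str]


class Guardrail:
    """Hypothetical guardrail scoring one agent step against the three questions."""

    def __init__(self, allowed_collections: Set[str],
                 baseline_transitions: Set[Transition],
                 intent_scorer: Callable[[str, str], float],
                 min_alignment: float = 0.5) -> None:
        self.allowed_collections = allowed_collections
        self.baseline_transitions = baseline_transitions
        self.intent_scorer = intent_scorer
        self.min_alignment = min_alignment

    def evaluate(self, prompt: str, retrieved: Sequence[str],
                 tools: Sequence[str], action: str) -> List[str]:
        violations: List[str] = []
        # 1. Context: is it retrieving records outside the task's scope?
        stray = set(retrieved) - self.allowed_collections
        if stray:
            violations.append(f"retrieval outside scope: {sorted(stray)}")
        # 2. Tools: any consecutive tool pair never observed in the baseline?
        novel = [t for t in zip(tools, tools[1:]) if t not in self.baseline_transitions]
        if novel:
            violations.append(f"unprecedented tool transitions: {novel}")
        # 3. Intent: does the proposed action align with the original prompt?
        if self.intent_scorer(prompt, action) < self.min_alignment:
            violations.append("action not aligned with user intent")
        return violations


def keyword_overlap(prompt: str, action: str) -> float:
    """Placeholder scorer: share of action words that also appear in the prompt."""
    p, a = set(prompt.lower().split()), set(action.lower().split())
    return len(a & p) / len(a) if a else 0.0


guard = Guardrail({"orders"}, {("search_orders", "draft_refund")}, keyword_overlap)
print(guard.evaluate("refund order 42", ["orders"],
                     ["search_orders", "draft_refund"], "refund order 42"))  # []
print(len(guard.evaluate("refund order 42", ["orders", "hr_salaries"],
                         ["search_orders", "export_csv"],
                         "export salaries to external bucket")))  # 3
```

Checking consecutive tool pairs against a baseline is one simple way to encode “an unprecedented chain”. Richer sequence models would slot into the same place.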

Behavior Becomes the Credential

Instead of just checking a static token at the door, the guardrail continuously evaluates the agent’s behavior against those three questions.

If the agent stays within its expected “lane,” the guardrail effectively issues a passing behavioral credential, allowing the action to execute.

If the agent drifts—say, its tool sequence shifts and it suddenly tries to exfiltrate data—the guardrail detects the semantic anomaly. It acts as the Policy Decision Point (PDP) and instructs the API Gateway (the Policy Enforcement Point) to instantly cut off access, even though the agent’s static API key is still technically valid.
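The split between decision and enforcement can be sketched as follows, assuming the guardrail's findings arrive as a list of violation strings per request. `PolicyDecisionPoint` and `Gateway` are hypothetical classes. The status codes follow the usual HTTP convention: 401 when the token itself is bad, and 403 when the token is fine but access is withdrawn.

```python
from typing import List, Set


class PolicyDecisionPoint:
    """Hypothetical PDP: turns guardrail findings into allow/deny decisions."""

    def __init__(self) -> None:
        self.revoked_sessions: Set[str] = set()

    def decide(self, session_id: str, violations: List[str]) -> bool:
        if violations:
            # Revocation is sticky: the session stays cut off afterwards.
            self.revoked_sessions.add(session_id)
        return session_id not in self.revoked_sessions


class Gateway:
    """Hypothetical PEP: enforces the PDP's decision on top of the token check."""

    def __init__(self, pdp: PolicyDecisionPoint) -> None:
        self.pdp = pdp

    def handle(self, token_valid: bool, session_id: str, violations: List[str]) -> int:
        if not token_valid:
            return 401  # static credential rejected
        if not self.pdp.decide(session_id, violations):
            return 403  # token still valid, behavioral credential withdrawn
        return 200


gateway = Gateway(PolicyDecisionPoint())
print(gateway.handle(True, "agent-7", []))                                  # 200
print(gateway.handle(True, "agent-7", ["unprecedented tool transitions"]))  # 403
print(gateway.handle(True, "agent-7", []))                                  # 403
```

Making revocation sticky for the session is a design choice. Once the intent is suspect, a clean-looking next step should not restore access on its own.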

The guardrail is the authorization engine.

Open Questions for the Runtime

While this architecture addresses the non-deterministic authorization gap, thinking through its runtime implications leaves me with two big open questions for the infrastructure community:

- The Latency Challenge: How do we approach the performance overhead? Running continuous behavioral evaluation (like an LLM-as-a-judge) directly in the critical authorization path seems like it could create significant bottlenecks for high-throughput systems.

- Defining the Baseline: In highly variable workflows (e.g., an agent dynamically pulling highly diverse, unpredictable context via RAG), how do we establish a reliable “baseline” of normal behavior without triggering constant false-positive access revocations?

Behavior as a credential isn’t just an administrative audit log. It is the active immune system required to make autonomous agents safe for the enterprise. We just have to figure out the plumbing.